Few-Step Diffusion Language Models via Trajectory Self-Distillation

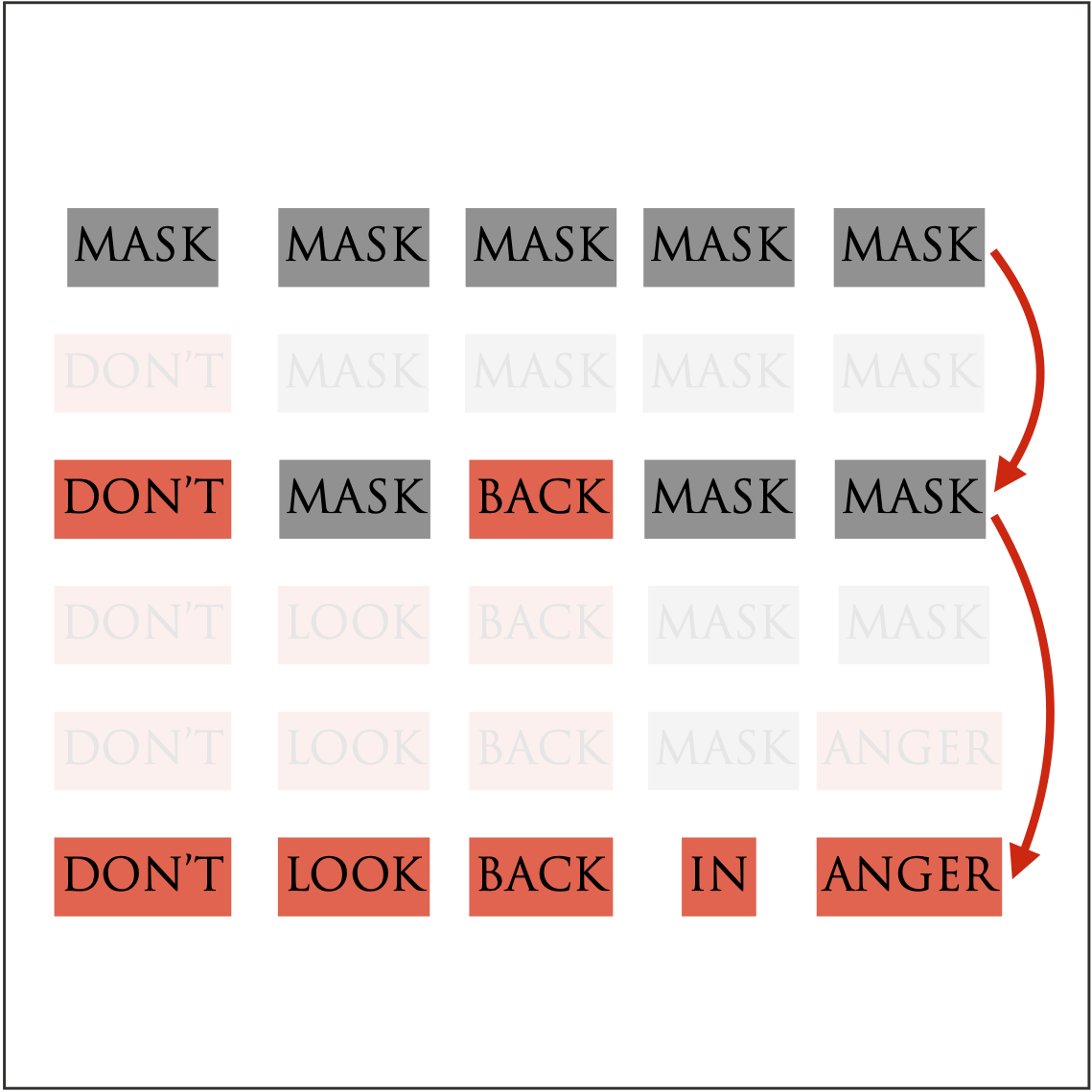

T3D (Trajectory Self-Distillation via DDO) is a self-distillation framework for diffusion large language models (DLLMs) that trains a few-step student by matching the teacher’s generation trajectories. We show theoretically that trajectory-level distillation enables few-step decoding by reducing factorization error, and empirically that T3D consistently outperforms existing few-step decoding baselines.
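To make "factorization error" concrete, here is a minimal sketch. It assumes the error is measured as the KL divergence between the true joint distribution over tokens unmasked in the same step and the product of per-token marginals that a one-step parallel decoder samples from. This is a standard way to quantify the gap, but the paper's exact definition may differ. The toy distribution and all names (`JOINT`, `parallel_distribution`, `sequential_distribution`, `kl`) are hypothetical, and the code does not implement T3D itself.

```python
import math

# Hypothetical toy joint distribution over two masked positions (A, B).
# The tokens are strongly correlated: "New York" and "Los Angeles" are
# likely, while mixed pairs are not.
JOINT = {
    ("New", "York"): 0.45,
    ("Los", "Angeles"): 0.45,
    ("New", "Angeles"): 0.05,
    ("Los", "York"): 0.05,
}


def marginals(joint):
    """Per-position marginals p(a) and p(b) of the joint."""
    pa, pb = {}, {}
    for (a, b), p in joint.items():
        pa[a] = pa.get(a, 0.0) + p
        pb[b] = pb.get(b, 0.0) + p
    return pa, pb


def parallel_distribution(joint):
    """Unmask both positions in ONE step: each is sampled from its own marginal."""
    pa, pb = marginals(joint)
    return {(a, b): pa[a] * pb[b] for a in pa for b in pb}


def sequential_distribution(joint):
    """Unmask A first, then B conditioned on A (TWO steps): p(a) * p(b | a)."""
    pa, _ = marginals(joint)
    return {(a, b): pa[a] * (p / pa[a]) for (a, b), p in joint.items()}


def kl(p, q):
    """KL(p || q) in nats, skipping zero-probability outcomes of p."""
    return sum(pv * math.log(pv / q[k]) for k, pv in p.items() if pv > 0)


if __name__ == "__main__":
    par = parallel_distribution(JOINT)
    seq = sequential_distribution(JOINT)
    print(f"one-step factorization error: {kl(JOINT, par):.4f} nats")  # 0.3681
    print(f"two-step factorization error: {kl(JOINT, seq):.4f} nats")
    # One-step decoding assigns far more mass to the incoherent pair (0.05 under the joint).
    print(f"P('New Angeles') with one step: {par[('New', 'Angeles')]:.2f}")  # 0.25
```

Decoding the positions one at a time recovers the joint exactly but costs an extra step. That trade-off between step count and quality is the one few-step decoding has to manage.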
